The Separation Principle

What Claude’s Agent Skills Architecture Actually Means

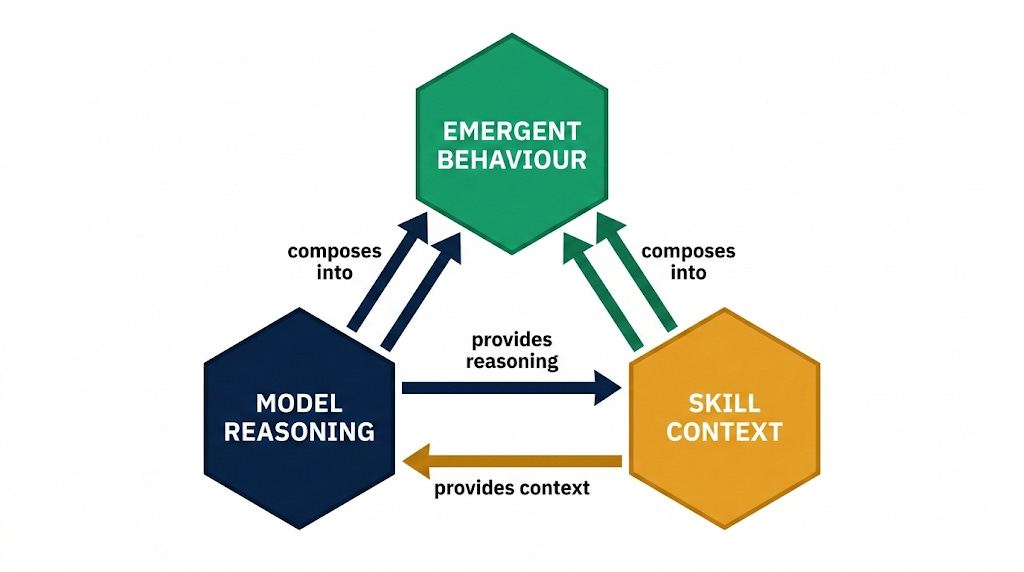

The Agent Skills architecture represents a proof of concept for something the AI industry has been converging toward without quite naming: the separation of model capability from model knowledge. The model provides reasoning; the skill provides context; the composition produces behaviour that neither could generate alone. This is not a feature distinction. It is an architectural argument, one that challenges a decade of assumptions about how specialised AI capability should be built and delivered.

The most recent and concrete demonstration of this argument is Claude’s Agent Skills system, introduced by Anthropic in 2025 and, until recently, poorly understood even within technical circles. The dominant assumption has been that Skills operate like traditional function calls or code execution modules. As Han Lee, a machine learning engineer who reverse-engineered the system, documented in October 2025, that assumption is wrong.

Skills are prompt-injection templates that modify both the conversational context and the execution environment without writing or running a single line of code. What looks from the outside like a tool invocation is, under the surface, a mechanism for loading domain-specific instructions directly into Claude’s reasoning context and scoping the model’s tool permissions to match.

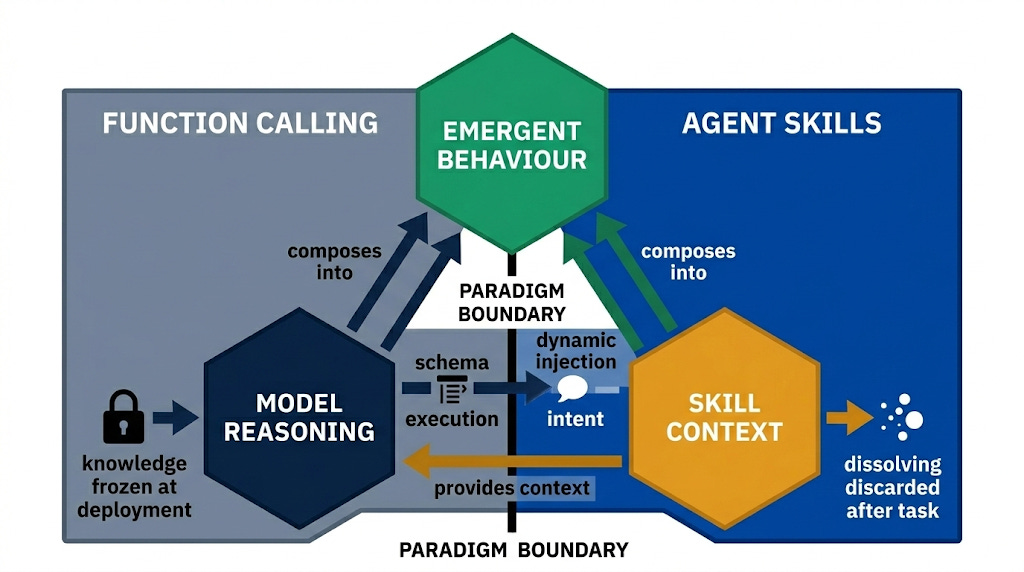

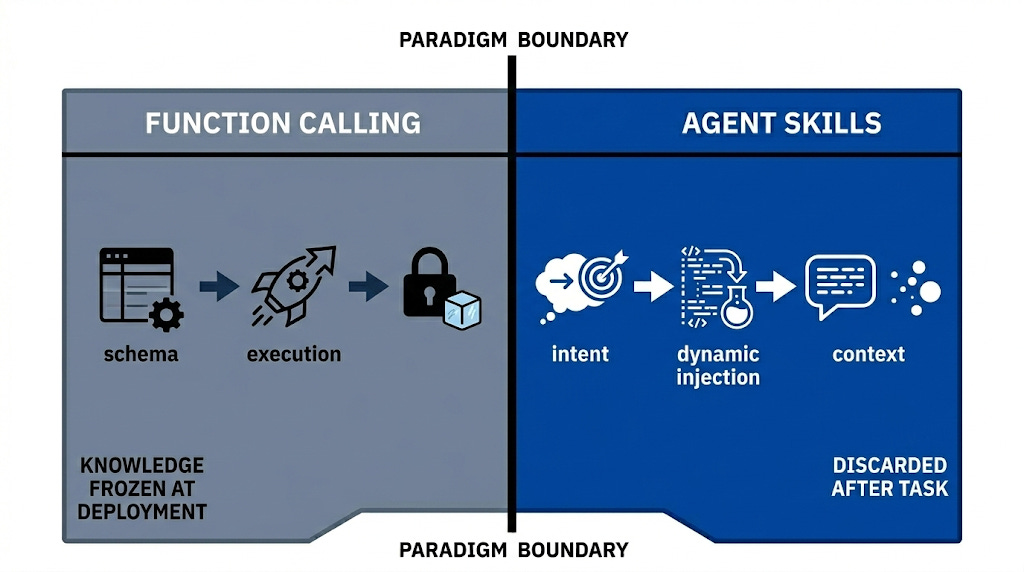

For much of the past decade, the dominant paradigm for extending AI system capabilities has been function calling: a model is given a schema of available functions and selects one based on user input, the host application executes it, and the result is returned to the model.

This model, refined through successive generations of the OpenAI API and adopted across the industry, has the virtue of determinism. It has the corresponding limitation that specialised knowledge must be encoded either in the function’s logic or in a static system prompt, neither of which scales well across diverse domains. The knowledge is frozen at deployment.
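To make the contrast concrete, here is a minimal sketch of the function-calling loop described above. The tool name, schema layout, and `dispatch` helper are hypothetical illustrations rather than any vendor's actual API, and the model's selection step is replaced by a hard-coded stand-in.

```python
import json

# Hypothetical schema in the general style of function-calling APIs.
TOOLS = [
    {
        "name": "get_invoice_total",
        "description": "Return the total for an invoice ID.",
        "parameters": {
            "type": "object",
            "properties": {"invoice_id": {"type": "string"}},
            "required": ["invoice_id"],
        },
    }
]


def get_invoice_total(invoice_id: str) -> dict:
    # The domain knowledge lives in this code, so it is frozen at deployment.
    totals = {"INV-1": 120.0}
    return {"invoice_id": invoice_id, "total": totals.get(invoice_id)}


REGISTRY = {"get_invoice_total": get_invoice_total}


def dispatch(tool_call: dict) -> str:
    """Run the function the model selected and serialise the result."""
    func = REGISTRY[tool_call["name"]]
    args = json.loads(tool_call["arguments"])
    return json.dumps(func(**args))


# Stand-in for the model's output: a function name plus JSON-encoded arguments.
model_tool_call = {
    "name": "get_invoice_total",
    "arguments": '{"invoice_id": "INV-1"}',
}
result = dispatch(model_tool_call)
```

Changing what the system knows about invoices means changing `get_invoice_total` or the system prompt and redeploying, which is the constraint the next section addresses.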

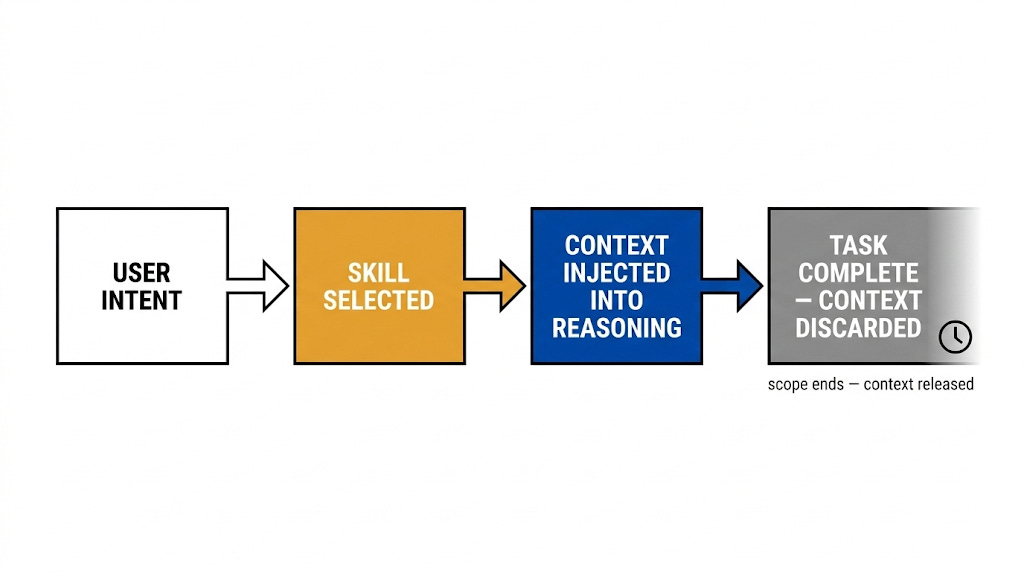

The Agent Skills architecture dissolves that constraint. Rather than treating specialised capability as executable logic, it treats it as prompt context, delivered at the moment of need, scoped to the task, and discarded once the interaction is complete.

Deconstructing the Meta-Tool

There are several architectural decisions that make this system work, each with significant implications for how AI capabilities are designed and deployed. The first is the decision to implement Skills through a meta-tool, a single tool named ‘Skill’ that acts as a container and dispatcher for all individual skills. The second is the mechanism by which skill selection occurs. The third, and most technically elegant, is the dual-message injection pattern.

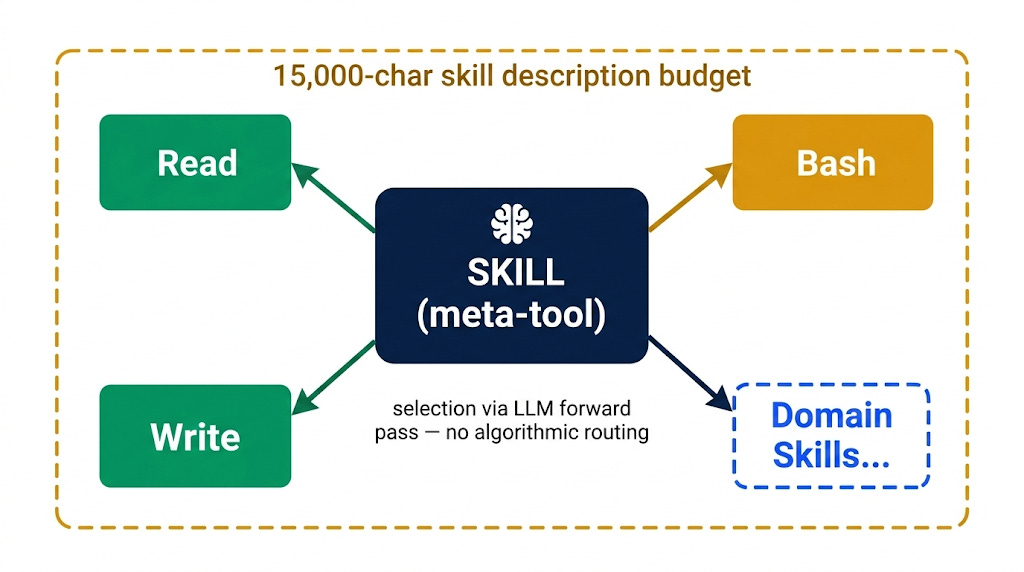

The meta-tool design means that individual skills do not appear in Claude’s tools array directly. Instead, a single Skill tool is listed alongside Read, Write, and Bash, and its description field contains a dynamically generated list of all available skills (their names and brief summaries), subject to a 15,000-character budget. Claude reads this list and uses its native language understanding to match user intent against skill descriptions. There is no algorithmic routing, no embedding-based semantic search, and no regex pattern matching at the application level. The selection happens entirely within the model’s forward pass through the transformer.

This is pure LLM reasoning applied to what is, structurally, a classification problem encoded as natural language.
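A rough sketch of how such a meta-tool definition might be assembled is shown below. Only the tool name "Skill" and the 15,000-character budget come from the description above; the header wording, the `skill` input field, and the decision to drop skills that overflow the budget are assumptions made for illustration.

```python
from dataclasses import dataclass

SKILL_LIST_BUDGET = 15_000  # character budget cited above


@dataclass
class SkillSummary:
    name: str
    description: str


def build_skill_tool(skills, budget=SKILL_LIST_BUDGET):
    """Build one tool definition whose description lists every skill that fits."""
    header = "Invoke a skill by name. Available skills:\n"
    lines = []
    used = len(header)
    for skill in skills:
        line = f"- {skill.name}: {skill.description}\n"
        if used + len(line) > budget:
            break  # assumed policy: later skills are simply not advertised
        lines.append(line)
        used += len(line)
    return {
        "name": "Skill",
        "description": header + "".join(lines),
        # Hypothetical input schema: the model names the skill it wants.
        "input_schema": {
            "type": "object",
            "properties": {"skill": {"type": "string"}},
            "required": ["skill"],
        },
    }
```

Note that nothing here routes a request to a skill. The function only produces text for the model to read, and the matching of intent to skill happens in the model itself.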

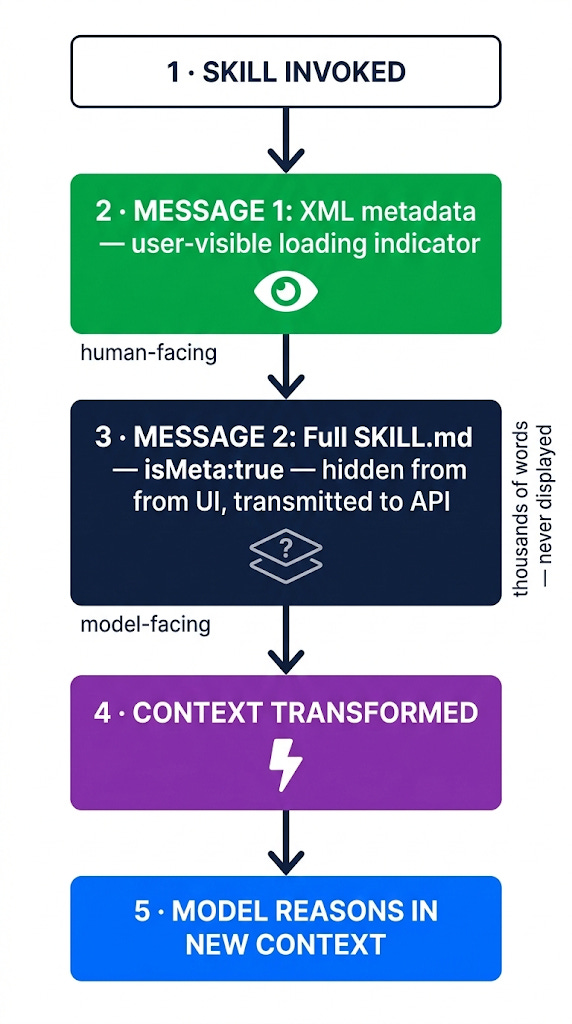

When a skill is selected and invoked, the system follows a sequence that departs radically from traditional tool execution. Rather than running code and returning a result, the Skill tool injects two user-role messages into the conversation history. The first contains concise XML metadata, a loading indicator visible to the user in the interface. The second contains the full skill prompt: potentially thousands of words of specialised instructions loaded from a SKILL.md file, marked with an isMeta flag that hides it from the user interface while ensuring it is transmitted to the API. The model never runs the skill. The skill runs the model, by transforming the context in which the model reasons about the task.
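The dual-message pattern can be sketched in a few lines. The `isMeta` flag and the user role come from the description above; the XML tag names and the helper functions are hypothetical, and the real message format may differ.

```python
def build_skill_messages(skill_name: str, skill_prompt: str) -> list[dict]:
    """Return the two user-role messages injected when a skill loads."""
    visible = {
        "role": "user",
        # Hypothetical tag names: a short status line shown in the transcript.
        "content": f"<skill-status><name>{skill_name}</name></skill-status>",
    }
    hidden = {
        "role": "user",
        "content": skill_prompt,  # full instructions loaded from SKILL.md
        "isMeta": True,           # hidden from the UI, still sent to the API
    }
    return [visible, hidden]


def render_transcript(messages: list[dict]) -> list[str]:
    """What the user sees: every message except those flagged isMeta."""
    return [m["content"] for m in messages if not m.get("isMeta")]


def to_api_payload(messages: list[dict]) -> list[dict]:
    """What the model sees: all messages, with the UI-only flag stripped."""
    return [{"role": m["role"], "content": m["content"]} for m in messages]
```

The same list feeds both views, so the interface and the model stay in sync while seeing different amounts of detail.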

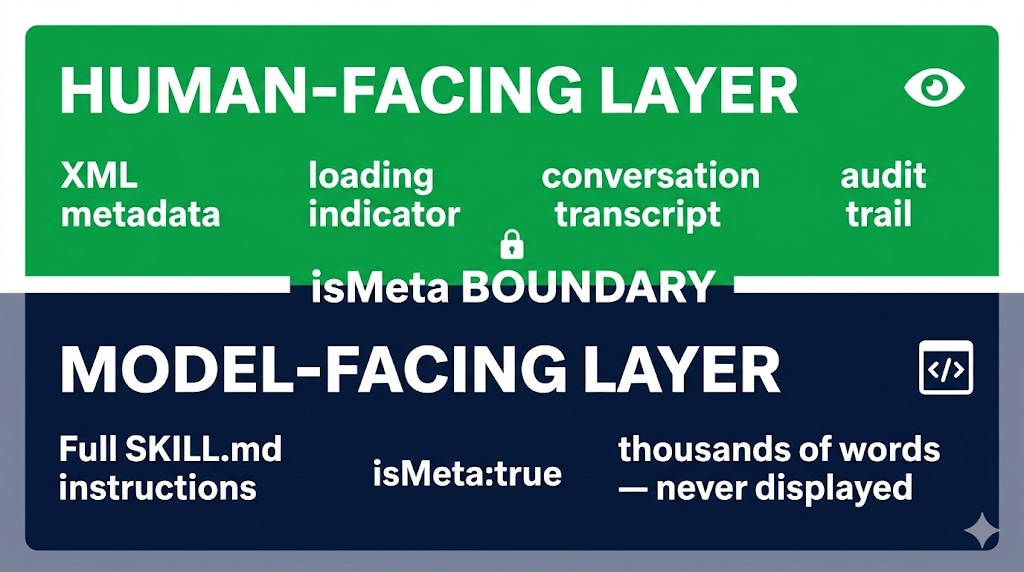

This dual-message architecture solves a genuine design problem. Users need transparency about which capabilities are active; Claude needs detailed instructions to execute specialist workflows. Collapsing both into a single message forces an impossible choice: either expose the full prompt in the interface, cluttering the transcript with thousands of words of internal AI instructions, or hide everything and eliminate the audit trail entirely. The isMeta flag enables a clean separation between human-facing communication and model-facing instruction that is elegant in its simplicity.

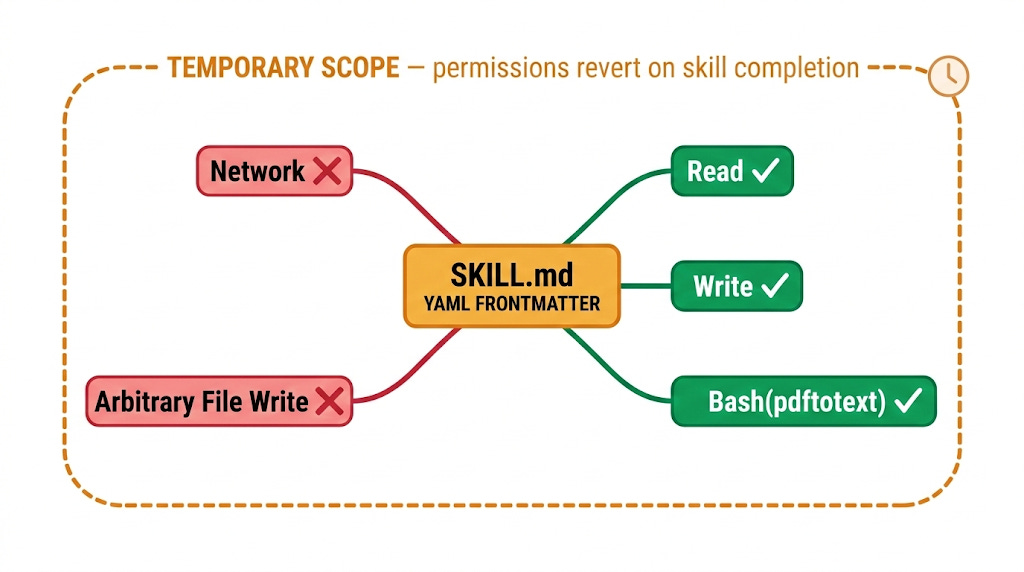

The execution context modification is the final piece. Skills specify, in their YAML frontmatter, which tools they are permitted to use: Bash(pdftotext:*), Read, Write, or any subset of available operations. When the skill loads, a context modifier function pre-approves these tools for the duration of the interaction without requiring user confirmation for each invocation. The scope is temporary: once the skill completes, permissions revert. A PDF extraction skill cannot invoke network calls; a code analysis skill cannot write to arbitrary file paths. The surface area of each skill’s operation is precisely constrained by its author.
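One way to picture the temporary scoping is a context manager that widens the pre-approved set on entry and restores it on exit. This is a sketch under assumptions: the `ExecutionContext` class, the `skill_scope` helper, and the use of shell-style wildcard matching for patterns such as Bash(pdftotext:*) are all illustrative, not the documented mechanism.

```python
from contextlib import contextmanager
from fnmatch import fnmatchcase


class ExecutionContext:
    """Tracks which tool calls may run without asking the user."""

    def __init__(self):
        self.pre_approved: set[str] = set()

    def is_pre_approved(self, tool_call: str) -> bool:
        # Assumed semantics: '*' in a pattern matches any run of characters.
        return any(fnmatchcase(tool_call, p) for p in self.pre_approved)


@contextmanager
def skill_scope(ctx: ExecutionContext, allowed_tools: list[str]):
    """Pre-approve a skill's tools, then revert when the skill completes."""
    previous = set(ctx.pre_approved)
    ctx.pre_approved |= set(allowed_tools)
    try:
        yield ctx
    finally:
        ctx.pre_approved = previous  # permissions revert, even on error


ctx = ExecutionContext()
with skill_scope(ctx, ["Bash(pdftotext:*)", "Read", "Write"]):
    inside_pdf = ctx.is_pre_approved("Bash(pdftotext:report.pdf)")
    inside_curl = ctx.is_pre_approved("Bash(curl:example.com)")
after_read = ctx.is_pre_approved("Read")
```

Inside the scope the pdftotext call is pre-approved and the curl call is not; once the block exits, even Read is no longer pre-approved.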

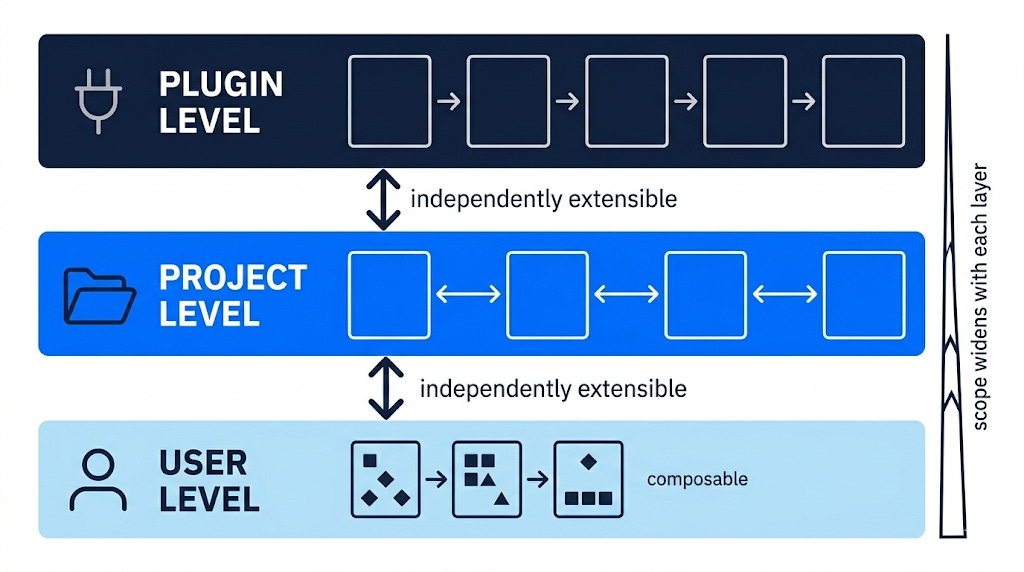

The implications of this design are more consequential than they might initially appear. Anthropic has created a system in which specialised knowledge (domain expertise, workflow logic, tool permission schemas) can be packaged, distributed, and loaded without modifying the underlying model or its system prompt. Skills can be installed at the user level, the project level, or through plugins, and can be extended or replaced independently of one another.
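As an illustration of what such a package might contain, the sketch below embeds a small, invented SKILL.md and splits it into frontmatter fields and prompt body. The field names (`name`, `description`, `allowed-tools`) and the skill content are assumptions for the example, and the parser handles only flat `key: value` lines; a real loader would use a proper YAML parser.

```python
# Invented example of a skill file: YAML frontmatter followed by the prompt.
SKILL_MD = """\
---
name: pdf-extract
description: Extract text from PDF files for summarisation.
allowed-tools: Bash(pdftotext:*), Read, Write
---
# PDF extraction

1. Run pdftotext on the input file.
2. Read the output and summarise it.
"""


def parse_skill(text: str) -> dict:
    """Split a skill file into metadata and the prompt body."""
    _, frontmatter, body = text.split("---\n", 2)
    meta = {}
    for line in frontmatter.splitlines():
        # Split at the first colon only, so values may contain colons.
        key, _, value = line.partition(":")
        meta[key.strip()] = value.strip()
    tools = [t.strip() for t in meta.get("allowed-tools", "").split(",") if t.strip()]
    return {
        "name": meta["name"],
        "description": meta["description"],
        "allowed_tools": tools,
        "prompt": body.strip(),
    }


skill = parse_skill(SKILL_MD)
```

Everything the skill contributes is data in a text file: the description feeds discovery, the tool list feeds permission scoping, and the body becomes the injected prompt.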

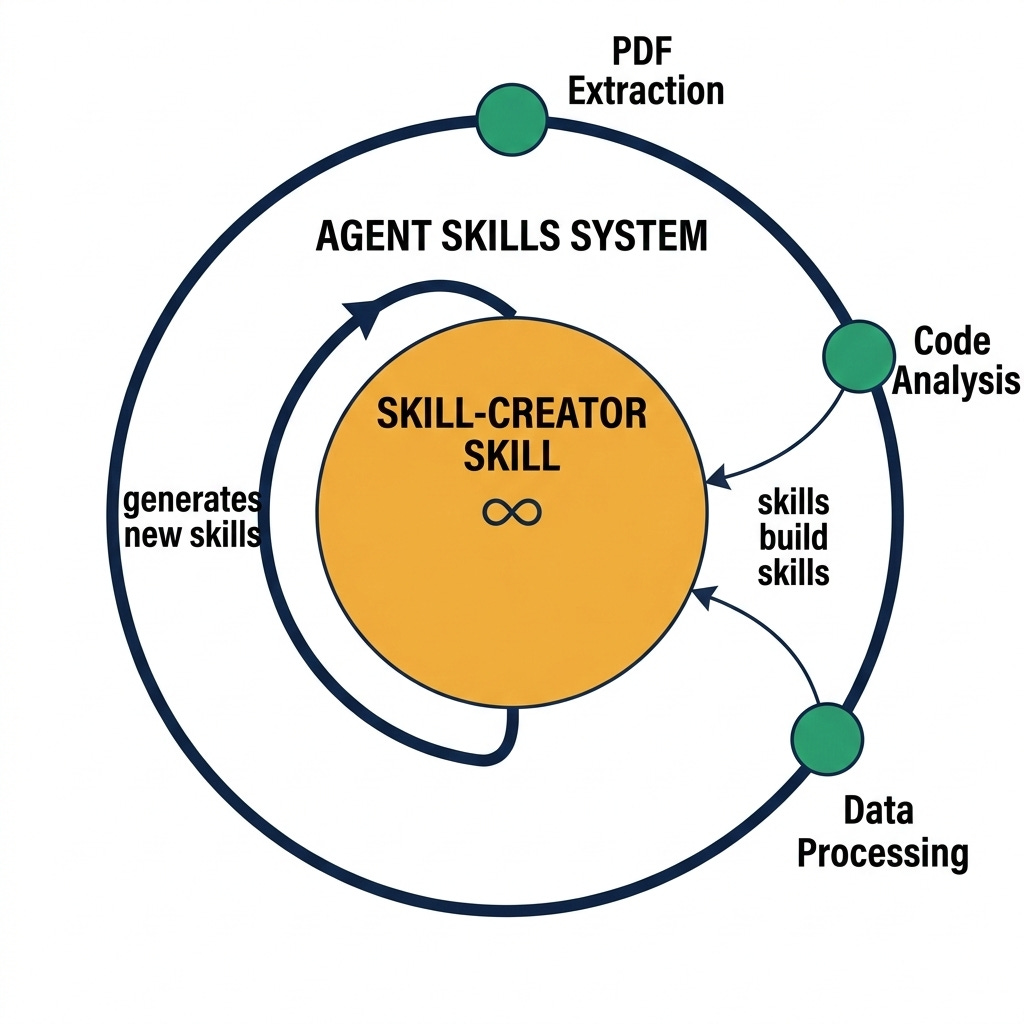

The composability is genuine: a skill-creator skill exists precisely to help users build new skills, encoding the pattern recursively within the system itself.

This modularity comes with tradeoffs that the current architecture does not fully resolve. Skills are not concurrency-safe, meaning that a skill which modifies execution context in a long-running interaction could produce unexpected behaviour if interrupted or overlapped. The 15,000-character budget for the skills list creates a natural ceiling on how many skills can be made simultaneously discoverable to the model, which will become a meaningful constraint as skill libraries grow. And because skill selection relies entirely on LLM reasoning rather than deterministic routing, the quality of skill descriptions becomes a significant variable in system reliability: a poorly worded description can cause an appropriate skill to be bypassed in favour of a less suitable one.

These are tractable engineering problems, not fundamental limitations of the approach. The more significant observation is that the Agent Skills architecture represents a proof of concept for something the AI industry has been converging toward without quite naming: the separation of model capability from model knowledge. The model provides reasoning; the skill provides context; the composition produces behaviour that neither could generate alone. As AI systems move from monolithic deployment toward modular, composable architectures, the design principles embedded in Claude’s Skills system (prompt templates as capability units, dynamic context injection, scoped execution permissions) are likely to influence how the field builds next-generation agent frameworks.

Phil